15 of the Most Evil People in History

The path of history has never been one that bends to fairness or morality. Everyone in history chooses how they wish to treat others and what kind of person they wish to be. And while there is plenty of evidence that most of us are good, it’s a sad truth that the evil people, the truly terrible ones, are often able to have great influence and cause great suffering as a result of their dark ambitions and disregard or even hatred for the rest of us. And a handful of these people can even change the course of history by being the most evil people that have ever lived.

Pol Pot

Pol Pot was the leader of Cambodia’s Khmer Rouge party in the late 1970s. The party was formed by radical communists who were staunchly against the idea of the free market and even most forms of social infrastructure as we understand them, like banks, medicine and even money. Instead, it was their belief they could create a perfect society by essentially abolishing everyone’s normal way of life and forcing them into a new one.

They rose to power in the wake of the Vietnam War, having allied themselves with the Viet Cong and fought against the United States and Cambodian forces during the war. The US dropped over 500,000 tons of bombs on Cambodia, further cementing the Khmer Rouge beliefs in an anti-American approach to taking control.

After the war, the Khmer Rouge had taken control of much of the country. Cambodian citizens were forced from their homes and made to work on farms and in other industries deemed necessary for the greater good of the whole society. The new regime seized all assets. Children were removed from parents, and behavior was strictly regimented, including what you could wear and even how you could speak.

The idea was that no one would be better than anyone, class would be removed from the equation and everyone would do equal work for the entire society. But, keep in mind, none of these citizens agreed to it. It was only by the actions of Pol Pot and the Khmer Rouge that this came to pass, forcing people to work or suffer the consequences.

The poorly planned society suffered quickly and dramatically as a result of the actions of the Khmer Rouge. The result came to be known as the Cambodian Genocide.

One of the major issues from the get go was that the workers forced onto farms were not true farmers and therefore had no idea how to work the land. The farms meant to feed the population could not produce enough food to meet demands. A famine began to spread very soon after the plan was implemented.

Because the Khmer Rouge believed that Cambodia needed to be self-sustaining, it did not bring in any aid from outside. This included medicine. People with easily treatable conditions suffered and died because the government refused to make use of anything not already available in Cambodia.

The regime’s rules were ironclad, and violations were punished by death. Countless citizens were executed for something as small as harvesting wild crops or speaking ill of the regime. Being a different ethnicity like Chinese, even if only partially, could result in execution. Being from a wealthy family, being too highly educated, all manner of things were perceived as threats that merited execution. They would even kill those they suspected of being against them without proof. There were no trials for any of this. So many people were killed that their bodies were dumped in mass graves called the Killing Fields. Some were sent to camps to be tortured first.

It’s believed between 1.5 million and 3 million citizens died. Pol Pot was removed from power and for a time ran guerilla operations in the jungles. Eventually he was captured but allowed to live out his life under house arrest. He died in 1998 of heart failure.

Leopold II of Belgium

One of the lesser known monsters of history, Leopold II of Belgium was born in 1835. In 1885 he declared himself the ruler of what he called the Congo Free State but what is currently known as the Democratic Republic of Congo. There was no process by which he came to decide he should be the ruler, and certainly no one in the country had agreed to it. But the other countries of Europe and the United States agreed to it and so, as far as they were all concerned, it simply was. He was not the ruler of the land; he was its owner.

He had sent explorer Henry Morton Stanley into the country to set up a small amount of infrastructure and to dupe local chieftains into signing contracts that they didn’t understand. Sometimes Leopold would even doctor the documents after the fact to make them even more beneficial to himself.

Leopold ran the Congo Free State like it was simply a bank. He exploited its people and its resources, taking as much wealth as he could in the form of poached ivory with the aid of a mercenary force he had hired to go in and basically gut the land.

When the price of rubber began to rise, Leopold realized he could harvest and process natural rubber in the Congo so he forced the inhabitants into slavery to accomplish this for him. His private army would raid villages and take all the women hostage. The men would be forced to harvest rubber from wild vines and meet monthly quotas. The more rubber they harvested, the harder it was to find more and the longer they would have to wander the jungles while wives and daughters were held captive.

Many of the female captives starved to death. Men ended up working themselves to death and many thousands, maybe even hundreds of thousands, fled their homes to escape Leopold’s forces. After a failed rebellion, tens of thousands more were executed.

Leopold had issued a rule for his private army in the face of rebels. They needed to account for all bullets fired so, to prove they were not wasted, soldiers needed to show an officer a severed hand for every bullet they fired. It’s said that they would return from scouring the jungles with baskets full of hands. And if anyone ever did miss or choose to shoot game, they needed to find a living victim from which they could take a hand.

Between 1880 and 1920 it’s estimated that the Congolese population dropped from 20 million to 10 million. Famine due to no one being able to work the land and harvest food, disease and low birth rate as a result of families being torn apart ensured the population was destroyed.

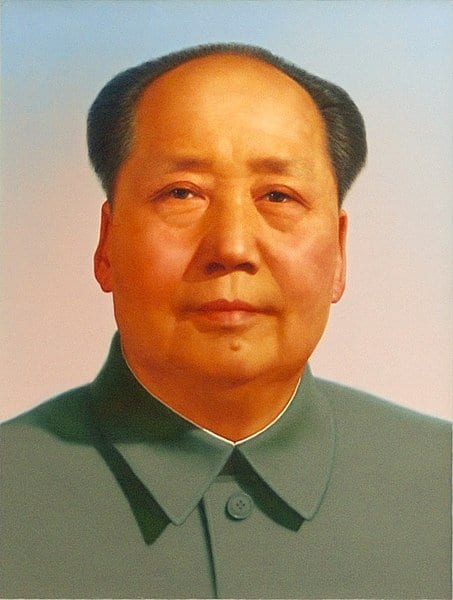

Mao Zedong

Mao Zedong was chairman of the Communist Party of China and has been credited with being the single worst mass murderer in human history with a death toll that may be as high as 45 million.

Mao’s father was a farmer and grain dealer, and Mao was expected to work on his family’s farm after receiving a rudimentary education. He rebelled against this and left home at 13, enrolling in another school to further his education before going to secondary school. While there, an anti-imperial revolution swept across the nation and his interest in Chinese nationalism grew, along with an interest in Marxist-Leninist philosophy.

He briefly served in the military and was at Peking University during the May Fourth Movement, when massive student protests against the presiding government were taken up after the Treaty of Versailles allowed Japan to retain lands in China instead of giving them back. This movement spurred a notable return to Chinese nationalism amongst many citizens and a rejection of what was considered Western liberalism. It set the stage for the rise of the Communist Party in China.

During the 1920s, Mao became heavily involved in the growing Communist party, taking on more responsibilities as the philosophy spread and attracted more support. He saw the potential strength inherent in the peasant class in terms of what it could mean for a revolutionary movement. He set up training for peasant movements and also spearheaded propaganda for the Communist party.

Rifts in the party began to arise as the more traditional Communists had no regard for the peasant class and looked down upon them. In time, Mao would be kicked out, but he still held the support of his peasant armies and some of the Red Army.

His rise to power was by no means swift and the campaign spanned many years. By 1949 he was officially recognized as the party chairman and his policies were enacted with ruthless efficiency. One landlord in every village was selected to be publicly executed during a land reform movement meant to redistribute land evenly. The US State Department estimated that close to 2 million people died even before this policy was enacted. After, numbers rose as high as 5 million, plus additional millions who were sent to reform camps never to return.

Mao instituted anti-corruption campaigns but also anti-capitalism campaigns. These persecuted business people in large cities and resulted in hundreds of thousands of additional deaths, many of them the result of suicides as people sought to escape further punishment. It was said that in cities like Shanghai, so many people were jumping to their deaths off of tall buildings that no one walked on the sidewalks near them to avoid being hit.

Another policy was enacted to show Mao’s willingness to listen to counter opinions about how the country should be run. People offered criticisms of what was happening and, shortly thereafter, Mao reversed the policy and executed everyone who had spoken out, killing around 500,000 people.

In 1958, Mao introduced the Great Leap Forward. This was meant to emulate the Soviet Union and shift China from agrarian to industrial. Farmlands were merged into huge communes and many farmers were redirected to work at industrial jobs like steel manufacturing. The plan was to make China a manufacturing superpower ahead of the rest of the world. People’s homes and possessions were seized, and the government took control of every aspect of their lives. Food was doled out in small amounts based on who was deemed to have earned it and to incentivize harder work.

Farms were left to wither and die while industry became the chief goal. A huge famine was soon to follow and estimates place the number of dead in the tens of millions. Based on numbers from the party itself, Mao likely caused 45 million deaths in just four years. Many died of hunger, others were tortured and executed for almost any perceived crime. In one case, a boy who stole a small amount of grain was buried alive and his father was forced to do it.

Mao died at age 82 while still in power.

Adolf Hitler

No figure in history is more closely associated with pure evil than Adolf Hitler, the leader of Germany’s Nazi party. Under his rule, lasting from 1933 until his death in 1945, he led the German forces in the Second World War. Casualty estimates for the war range from 35 million to as many as 85 million who may have died either directly or from famine and disease in the wake of the war. This included about 5.8 million Polish citizens, 27 million Soviet citizens, 20 million Chinese, 3.1 million Japanese, 5.3 million Germans, 3 million Indians, 450,000 British and 420,000 Americans.

After serving in World War I, Hitler turned to politics and found a home with the German Workers Party which would soon change its name and become what history remembers as the Nazi party. Hitler was in charge of propaganda and quickly rose through the ranks, using the anger and resentment that was building in Germany after their WWI loss to strengthen his position and the party’s.

Hitler proved to be better at organizing the Nazi party than any of its other higher-ranking officials and soon his will would overcome the others, despite misgivings that some may have had early on. He was able to captivate their followers and rally them and he exploited this to become the ruler of the party.

Once in control and able to spread his word through the party newspaper as well as in public forums, he was able to grow more and more influential until his failed attempt to overthrow the government.

After being arrested, his fame only grew. He wrote his book Mein Kampf while in prison and it laid out his political ideologies, which included a belief that races should not mix and that the Aryan race stood above all others. The German people, the Volk, should be the rulers of all in Hitler’s opinion. And their greatest enemies included Marxism and, more than anyone else, the Jewish people.

Once Hitler was appointed chancellor in 1933, Germany fell under total Nazi rule as the chancellorship and presidency were merged into one office. His goals were to expand German territory and put all the people in his way under the rule of what he deemed the master race.

Hitler’s policies left no room for mercy. His early plans involved expelling Jews as well as other races and groups like Gypsies, homosexuals and more. Then it changed to execution and murder. As many as six million Jewish citizens were rounded up by Nazis and sent to concentration camps where they were exterminated en masse.

Hitler was never held accountable for his crimes and, in 1945, he committed suicide in a bunker in order to evade capture.

Heinrich Himmler

There are countless Nazi officials who served below Hitler that deserve their own infamy for the terrible things they did during the Second World War. Heinrich Himmler is a standout among them for being the leader of the SS and the man chiefly held responsible for the Nazi “Final Solution.” He was the second most powerful Nazi and the man who ultimately orchestrated the Holocaust.

As a child and young man, Himmler wanted to join the army to fight in World War I but the conflict ended before he was able. Because of post-war treaties he had, at the time, no hope of joining the army and instead went to school to study agriculture. However, he was also becoming increasingly interested in hard right nationalist, racist politics. He soon rose through the ranks in the newly forming Nazi party, working alongside men like Ernst Rohm and Adolf Hitler.

Himmler worked as an aide to the head of Nazi propaganda and would write speeches for the Reich. His writing frequently stressed German nationalism and in particular the strength and priority of the “German race.” He also was prone to calling out those he considered enemies, in particular the Jewish people.

By 1929, Hitler had appointed Himmler as the leader of the SS which, at the time, was a force of under 300 men. The SS was then little more than private security for high-ranking officials. They even sold newspaper subscriptions for the party paper. Within four years, Himmler had grown the SS to 52,000 men. They were still responsible for security among officials but their secondary goal was related to Himmler’s own, and by extension the Nazi Party’s own, political ends: to secure racial dominance and perceived purity by eliminating their enemies.

By the end of 1934, Himmler controlled all police in Germany and established the Gestapo. The SS would also be in control of the concentration camps. Hitler officially placed Himmler and the SS outside of the organizational structure of the German government, making him and the SS independent and essentially untouchable under German law. The only authority Himmler had to answer to was Hitler himself. With no need to even pretend to follow established laws or rules, Himmler and the SS were free to imprison and murder as they saw fit. This led to the murder of some two million Jews and other perceived enemies of the Nazi party even before the war began.

By 1939, after Germany had attacked Poland, Himmler was given full authority to determine who qualified as German and who did not. In other words, he was personally allowed to determine who would live and who would die. Part of this included deciding where true Germans would be allowed to settle and who would be killed to make room for them. This began in Poland and then expanded into the Soviet Union. Throughout the Soviet territory, millions of Jewish citizens were summarily executed at mass graves, sometimes after being forced to dig them for themselves. In just one instance, at a place called Babi Yar in Kyiv, nearly 34,000 Jews were shot and left in a mass grave. About 1.5 million Jews died in Soviet territories as a result of SS executions.

As the Nazi army made their way through an area, divisions of the SS would follow behind and eradicate Soviets, Roma, the disabled and Jews in the newly occupied lands. The SS had been given authority, by Hitler, to implement their “Final Solution,” the euphemism known to be used by Himmler and other Nazi officials that meant the mass murder of all Jewish people.

Also in 1939, Himmler organized what was known as the Waffen SS, the armed division of the SS. This eventually grew to a force of 500,000 men. They existed outside of both civilian and even military jurisdiction. The SS policed themselves.

By 1944, Himmler was in charge of the Armed Forces Intelligence Service and had been given command of field divisions. However, the tides of the war were continuing to turn against Germany and Himmler eventually had word sent to US General Dwight D. Eisenhower that he wished to surrender. When Hitler learned of this, he stripped Himmler of all of his power. Himmler was captured by the Soviets and given to the British but took his own life before he could be held accountable for his war crimes.

Himmler’s policies, his SS and his death camps, ultimately led to the deaths of six million Jews as well as hundreds of thousands of Romani and others.

Josef Mengele

The final Nazi on our list, Josef Mengele was a doctor who earned the nickname the Angel of Death for his sadistic experiments on behalf of the Nazi regime. Like many members of the Nazi party, Mengele had seemingly humble beginnings, the son of a man who manufactured farming equipment. He attended medical school and earned a degree in physical anthropology and also in genetic medicine. In the years leading up to the war he worked at the Institute for Hereditary Biology and Racial Hygiene. It was around this time that he joined the Nazi party, in 1937, though he had previously been involved in other paramilitary groups that would later merge with the party.

His early medical work at the institute was heavily focused on studying twins. In particular, he was focused on genetic conditions that led to cleft chins and cleft palates. In his early years with the Nazi party he was a member of the SS and was a combat medic for a time until he was injured in the Soviet Union and deemed unfit for combat. He was sent back to work for the party in a strictly medical capacity at the SS Race and Settlement office.

In 1943, Mengele was promoted to captain and transferred to a new post at the Auschwitz concentration camp. Although Mengele was one of many doctors working at the camp, his reputation grew among the prisoners, and postwar revelations only added to it.

One of the duties of camp medical staff was to evaluate new prisoners as they arrived at the camp. The prisoners would be led onto a ramp where medical staff chose who would be pulled out to serve various work functions and who would be executed in the gas chambers right away. Typically, those who were murdered right away were those who were considered unfit for work which meant the elderly, the disabled, pregnant women, and children. Mengele was one of the doctors who administered the gas in the chambers.

Mengele performed these duties dispassionately, earning the nickname Angel of Death. Not only did he seem to have no sympathy for anyone as he selected who would live and who would die, the survivors of the camp recounted afterward that Mengele would also come to these evaluations when he wasn’t the medical officer in charge, during his off time, to see if any twins had arrived that he would specifically remove for his own work and experimentation. Survivors also reported that Mengele would sometimes smile during the selection process, or even whistle.

As a doctor in the camp, much of his work involved killing prisoners. When disease outbreaks happened, he would simply execute all the infected, clean their barracks, and then move new prisoners in. This happened with both scarlet fever and typhus outbreaks. However, this sort of medicine is not what the doctor was chiefly known for.

Mengele’s main area of interest involved human experimentation. He was very interested in research on twins and also people with heterochromia, or two different colored eyes. The research he conducted was purportedly to show the power of heredity and confirm the genetic superiority of the Aryan race. In practical terms, his research was torture.

It’s estimated that as many as 3,000 children were subjected to Mengele’s experiments. He would use one twin as the control while the other he would subject to experiments to see how it changed the subject. This could range from amputation to exposure to diseases, blood transfusions and more, resulting in the death of the twin who would then be dissected. In one single evening he killed 14 twins by injecting their hearts with chloroform.

Mengele often removed the eyes of his victims, allegedly for study. He would subject his Jewish and Roma victims to various diseases to demonstrate that they were not as able to resist disease and therefore not as pure as the German race. He was described as remorseless and sadistic by nearly everyone who had contact with him.

It’s unknown exactly how many people died as a result of Mengele’s actions but he sent hundreds of thousands to their deaths in gas chambers in addition to those he experimented on.

As the war came to a close, Mengele managed to evade capture and flee to South America. He was never brought to justice and died while swimming at a vacation resort in 1979.

Emperor Nero

He was born Lucius Domitius Ahenobarbus but is better known as Nero, the fifth Roman Emperor. He’s gone down in history for playing the fiddle while Rome burned (from a fire he allegedly set) and for being a brutal killer of family and foe alike. His time was short, and he died by assisted suicide when he was just 30 after ruling for 14 years.

Adopted by Emperor Claudius and raised as his heir, Nero did contribute to the culture and the economy of Rome, but he also offended the upper classes on a routine basis. Nero enjoyed what were considered lower class hobbies. He would perform in public by singing, charioteering, and even acting. These were the sorts of things slaves traditionally did, so it was something of a scandal for an Emperor to take part. The common folk may have loved it, but others did not.

Nero became Emperor at 17. His adopted father Claudius died and historians disagree as to whether it was Nero’s mother, Claudius’s wife Agrippina, who poisoned him. Regardless, the young Emperor took control of Rome and began a number of lavish construction projects.

This is where history, especially history based almost entirely on just a scant few sources, can become murky. Nero has been painted as irresponsible and extravagant, plunging Italy into serious debt for his lavish wants. But later historians question whether this is true or if Nero was attempting to actually boost an already failing economy. It is the way historians have painted Nero as a person that makes him a standout as a cruel and evil man. Though the reputation may not be all well-deserved, these accounts are what we have to go on and if we accept them at face value, then it’s true, Nero was far from a benevolent and just ruler.

The historian Suetonius wrote that Nero would sometimes wander the streets of Rome in disguise, approaching strangers and stabbing them to death before throwing the bodies in sewers. He is alleged to have hired assassins to poison his own mother, crush her with a collapsed ceiling, or drown her in a sinking boat. In the end, he settled on faking her suicide.

Nero was married to his adopted step sister when he was 15. At 24 he divorced her, then had her banished and murdered before the killers returned her head to him. He murdered his second wife on his own, kicking her in the stomach during her pregnancy.

The range of cruelty displayed by Nero was bizarre and uncomfortable. He once had a freed slave castrated then forced the man to marry him, as the bride, in a mock ceremony. He murdered a senator because he didn’t like his facial expressions.

During the Great Fire of Rome, merchant shops were set to flame and the fire spread, burning for an entire week. There are allegations that Nero had the fires set and simply left the city to watch it burn from on high where he sang songs as he watched. Two-thirds of Rome was destroyed by the time it ended.

It’s said Nero deflected the blame for the fire off of himself and onto Christians. As Nero had Christians purged in punishment, it was said that he killed the Apostles Peter and Paul by having Peter crucified upside down and Paul beheaded.

Unlike many of the other entrants on the list here, Nero’s story should at least be taken with a grain of salt. Because the history is being told by only a few writers, there is a strong belief among modern scholars that much of what we know, or think we know, about Nero is either exaggerated or even outright lies. This could simply all be propaganda designed to make a man look bad by his enemies at a time when he certainly would have had many enemies.

The truth will probably never be fully known. But if it is false, then the writers who created the lies succeeded in their goals, as history has come to remember Nero, across thousands of years, as a cruel and callous monster who was brutal and depraved in his actions.

It was after a rebellion that Nero fled the city to the home of a loyalist and decided to end his own life. According to accounts he was unable to perform the task himself and made one of his men kill him instead.

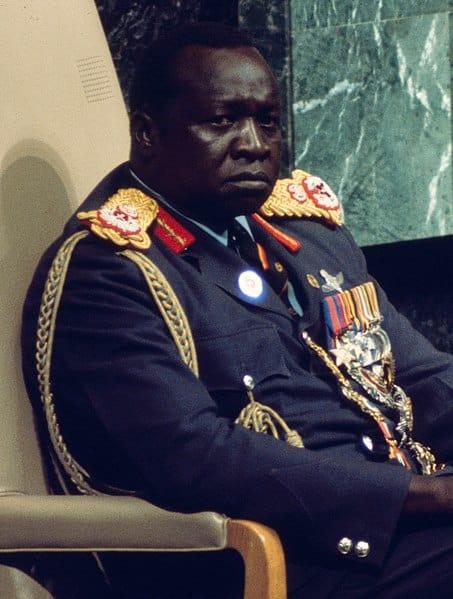

Idi Amin

Idi Amin ruled Uganda with brutality and an iron fist after serving as the Chief of Army Staff for the country and then enacting a coup d’état to seize control from the president. His reign may be one of the most terrifying in history and his violent acts are unmatched in their sheer savagery.

Amin had been a military man for years while Uganda was under British colonial rule and, in fact, started his military career as a simple cook. As he rose through the ranks, proving himself in numerous conflicts with neighboring nations such as Kenya and Somalia, he became commander by 1965, just three years after the country gained its independence. By 1970, he was Commander of the Armed Forces, ruling the entire military.

During this time, his penchant for brutality came to light as well. At one point, he and his men were to root out cattle thieves and officials in Nairobi later found that the criminals had been beaten and tortured, some of them buried alive. As the highest ranking Ugandan official in the military at the time, he was never punished for this but was instead promoted.

He was known for his physical prowess and intimidating stature. A large man at 6’4”, he had been a remarkable athlete all through his youth and excelled at many sports. However, he was also reputed to be not very intelligent, though still cunning.

Amin rose to power under president Milton Obote and for a time the two were aligned in their goals. This included smuggling and silencing any dissent among those who would speak out against them. However, Amin’s ambitions began to grow apart from Obote’s and the president grew wary as Amin was filling his ranks from the West Nile region of Uganda and supporting political causes that didn’t align with Obote’s. The president demoted Amin and took control of the military, at least in name.

When Obote left the country for a meeting in Singapore, Amin made his move. He had heard that Obote planned to arrest him upon his return, so Amin’s men took control of the capital and declared a military government. Because Obote was also a dictator and under his rule the country was marred by mass corruption and food shortages, the people welcomed Amin with open arms. It helped that Amin promised his presence was temporary until new, democratic elections could be held to choose a leader.

Within days, Amin declared himself president and instituted wide sweeping new rules. He appointed soldiers to many government positions, abandoned parts of the constitution and started to rule by decree, meaning his whims were to become law.

Amin had placed himself above the law and punished those who he felt were disloyal. When Obote supporters tried to retake the government a year after Amin took over, he had not only the soldiers purged, but everyone from their ethnic groups as well. Thousands of soldiers and civilians alike were just never seen again. Soon, Amin’s distrust turned to other ethnic groups and also individuals he felt couldn’t be trusted including journalists, students, religious leaders and more.

Amin appealed to Israel at one point for weapons to go to war with Tanzania, where former president Obote had taken refuge. Israel refused so Amin instead was aided by Libyan dictator Muammar Gaddafi. He then expelled any Israelis from Uganda as well as thousands of South Asians who had British citizenship. This move drastically damaged Uganda’s economy.

Over the course of Amin’s eight-year rule it’s believed at least 80,000 people died, but Amnesty International placed the number closer to 500,000. His methods of execution grew more brutal as time went on as well. Rumors spread that he kept the severed heads of some of his enemies in refrigerators so he could view them later.

Thousands of disabled people were said to have been tossed into the Nile River to be killed by crocodiles. Most disturbing, he openly admitted to having eaten human flesh though he claimed it was “too salty” for his tastes.

Eventually, public and military opinion turned against Amin and he began to lose support. He was forced to flee the capital as it was liberated from his rule and he was overthrown. After a short stay in Libya, the Saudi Royal Family gave him sanctuary. He died there of kidney failure in 2003.

Joseph Stalin

The Soviet Union was formed in 1922, after Bolshevik forces led by Vladimir Lenin had overthrown the government of Russia that had taken over after the fall of the Russian Empire. Within two years, Lenin had died and Joseph Stalin became leader. Though the Union was meant to be run as a coalition, by the 1930s the other oligarchs had all been removed from power, leaving Stalin as an undisputed dictator. Some historians have cited Stalin as having committed multiple genocides during his reign. Much of the debate around this is semantic, as the argument is not around whether people died but whether the victims can be classified in a way that meets the technical definition of genocide.

Stalin’s evil was not as single-minded as some, and he followed several paths that led to death and destruction for his enemies and innocent victims alike. He enacted a five-year plan that was meant to collectivize the agricultural industries in the Soviet Union so that they would be run by the state. To do this, many wealthy landowners were either expelled, executed or had their lands seized. Many retaliated by destroying livestock and crops. The peasants also resisted the collectivization plans and the ensuing turmoil led to massive shortages and withholding of grain and crops. The 1930-1933 famine is believed to have killed between 5 million and nearly 9 million people.

Stalin’s killing of the wealthy farmers, called kulaks, is considered by some to be not an ethnic genocide but a class one. He told Winston Churchill that at least 10 million had died by his hand. Later, his efforts would become more brutal and more explicit in terms of executions. He instituted something known as Order No. 00447, an order to put down any “anti-Soviet” elements. These broad terms opened the door for mass killings, and while thousands were arrested, by the end of the year after the order was initiated, nearly 400,000 Soviet citizens had been executed.

Later orders would be given to eliminate non-Soviet ethnic groups. This was meant to kill anyone in the Soviet Union who may have had any alternate origin including Poles, Germans, Koreans, Greeks and literally anyone not Russian by birth. Hundreds of thousands, and maybe more, died in this way.

During what was known as The Great Terror, one million people were executed and estimates suggest between four and six million were sent to gulags, or forced labor camps. One million of those are thought to have died there. They were either worked to death, starved to death, or even just executed by the guards. Few reliable records were kept so there is no way to ever know for sure. That said, there are reports that the gulags would sometimes round up prisoners who seemed on the brink of death and then release them knowing they were going to die, so they could lower their official mortality rates.

The total number of deaths that can be attributed to Joseph Stalin is not a number set in stone. There are figures from before the fall of the Soviet Union that said up to 20 million, and then numbers that came after based on Soviet records that are down around 6 million. However, some sources include the famine deaths while others do not as some don’t consider them deaths that were intentional. Regardless of Stalin’s motives, people certainly did die, and the numbers were in the millions.

Shiro Ishii

While the atrocities of the Nazi Party during WWII can’t be overstated, another group should be remembered as well – Japan’s Unit 731, led by microbiologist Shiro Ishii. He has been compared to Josef Mengele and the things he did were nightmarish at best.

Ishii was born to a wealthy family and was said to be an incredibly intelligent student as a child. Some called him arrogant and given what he was responsible for, it’s not hard to imagine that being true. Later, at medical school, it was said he didn’t get along with anyone as they found him pushy.

After medical school, Ishii joined the Imperial Army and continued to work on his medical studies, attaining a postgraduate degree. It was at this time he began experimenting with growing bacteria just for fun. He attained the rank of Army Surgeon, First Class and was soon promoting the idea of Japan developing a biological weapons program.

By 1935 he was a lieutenant-colonel and, with prominent high-ranking support, was put in charge of Unit 731. This secret military group was the biological and chemical weapons development wing of the Japanese military. Their work involved human experimentation.

The range of horrors committed by Unit 731 reads like something that can’t possibly be real. Subjects were typically Chinese, though some were Russian as well, kidnapped and forced to endure the Unit’s experiments. Men, women including pregnant women, and even children were experimented on. They were injected with deadly diseases so the scientists could watch the results. Ishii would inject typhoid into dumplings then follow the disease’s spread. He gave anthrax-laced chocolate to children in nearby towns to see how it would progress, or airdropped disease-laden supplies on towns to see how long it took to kill the whole population.

Other experiments involved freezing prisoners’ limbs until frostbite set in, then removing the limb to see how best to treat the condition; hyperbaric chambers used to determine how much pressure a human could withstand until they died; amputations without anesthetic; vivisection; forced dehydration; and much more. There was also rampant sexual assault of the prisoners, resulting in babies that were then used in experiments.

Ishii had developed plague bombs, ceramic or glass containers that could be dropped over a populated area that were full of fleas. The fleas were all infected with the Black Plague and would immediately disperse after their container broke open. These were tested out over China and there were plans to use them in the United States.

Everything Ishii did, all the death and torture, was claimed to be for a practical purpose. He wanted to simulate “real world” scenarios and learn how they could be dealt with to save Japanese soldiers who might suffer the same fate. Unit 731 tortured and killed thousands toward this end; estimates range from 200,000 to 300,000.

The US eventually captured Ishii and made a deal with him for immunity. He was not prosecuted and was paid 250,000 yen; in return, he shared much, but not all, of the information he had with US forces. Ishii ended up dying of cancer at age 67.

Ivan the Terrible

Born Ivan IV Vasilyevich, Ivan the Terrible was Tsar of Russia from 1547 to 1584. The fact he was called “Terrible” was not technically because he was evil, however. The translation was more to indicate someone powerful or formidable. He was the first tsar of Russia and his claim to power was made by force. He was said to be highly intelligent but also feared for his incredible and uncontrollable bursts of anger. He is said to have unintentionally killed his son during a rage-fueled argument, which also resulted in his daughter-in-law having a miscarriage.

His rise to power included conquering vast expanses of land while also suppressing those who might challenge his power by either seizing what they owned or executing them or both. Under his reign he oversaw the Massacre of Novgorod which resulted in somewhere between 25,000 and 60,000 deaths at the hands of his private army, the oprichniki.

The oprichniki were given total authority by Ivan to kill and torture anyone he suspected of betrayal. They were said to ride on horseback with severed dogs’ heads hanging from their saddles, a metaphor for their ability to sniff out betrayers. Anyone suspected of being a traitor could look forward to a variety of cruel and painful deaths. These included being impaled, boiled alive, drawn and quartered, or roasted over a fire.

Ivan was known to be paranoid, and he feared that the nobility of Novgorod would betray him as well. He accused the people there of treason and sent in his soldiers who destroyed outlying farmland along the way. He believed the church had been at the forefront of the treason to defect from Russia so he had the local clergy beaten and their churches and monasteries looted. Later, many would be cooked alive.

Merchants and upper class citizens were tortured and burned for information and things escalated from there. Women and children were bound and thrown in the frozen river. If any seemed like they might escape, soldiers with poles forced them back under water.

The soldiers took anything of value from the city and were told to kill anyone who resisted. All poor people, many of whom had fled Ivan’s soldiers, were ordered out of the city in the middle of the winter. In the aftermath, with no possessions and no viable farmland, many people starved to death or died of exposure.

Ivan himself lived on for some years, dying unexpectedly of a stroke in the middle of a game of chess at the age of 53.

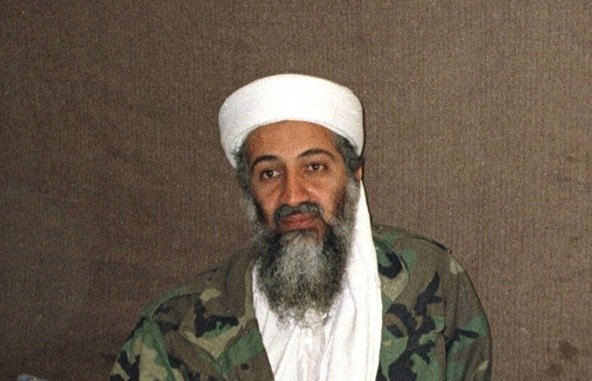

Osama bin Laden

Few names can conjure an image of fear and hatred quite like the name Osama bin Laden. The onetime leader of the terrorist group known as al-Qaeda, bin Laden masterminded the September 11, 2001 attacks against the United States of America that claimed 2,996 lives.

While many of history’s greatest monsters are often touted for the sheer magnitude of the effect they had on a population, often people under their rule, bin Laden holds a unique place for the calculated cruelty of his attack. His was not a thinly veiled attempt to gain control over a population or to eradicate political or religious enemies. His attack was meant to kill innocent civilians in a place far removed from himself, his people and his influence, specifically to terrorize and hurt not his victims but the place from which they came. It’s been said that bin Laden strongly believed civilian targets, including women and children, were reasonable and justifiable targets.

While most of us remember bin Laden from images of a bearded and disheveled man, he was actually the son of a billionaire and quite wealthy himself. It’s believed he inherited at least $25 million from his father.

The attacks bin Laden orchestrated have been linked to the man’s belief that United States foreign policy had oppressed and harmed many Muslims in the Middle East. Although he claimed it was more what the US did rather than who Americans were, he was also vocally opposed to many aspects of American life and its freedoms.

In the late 70s and 80s, groups associated with bin Laden were supported by the US government and the CIA during the Soviet-Afghan War, though there is some debate over how much direct financial or training support he may have received. Regardless, when the first war in Iraq broke out, bin Laden turned his eye to America, angered that a foreign nation was being supported by Saudi Arabia in the war.

Through the 90s, bin Laden’s involvement in international terrorism efforts increased. He was linked to a number of plots and his ire with America was only increasing as the country’s war in Iraq drew out for many years.

On September 11th, 2001, the world watched as two planes crashed into New York’s World Trade Center, while another hit the Pentagon and a fourth crashed in a field, taken down by the bravery of the passengers on board who fought back.

Osama bin Laden initially denied having anything to do with the attacks but later took credit. Thus began one of the most infamous manhunts in the history of the world and the start of America’s War on Terror.

It would not be until May 2, 2011, nearly a decade after the attacks, that a SEAL Team tracked down bin Laden in Pakistan and killed him.

Francisco Pizarro

The lore surrounding the discovery of the “New World” often, to this day, leaves out many of the atrocities committed against the people who already lived in the Americas when Europeans arrived. While Christopher Columbus has only recently really been taken to task for his involvement, many others are overlooked for their brutality. One such man was Francisco Pizarro.

The Spanish conquistador lived from 1471 to 1541 and in that time he laid waste to the empire of the Inca people. Only a peasant himself, Pizarro had no formal education and was simply searching for more. More gold and more wealth at any cost. Under his command, Spanish soldiers conquered Peru and executed Inca emperor Atahualpa before stealing his people’s gold.

Atahualpa had been invited to meet with Pizarro before he was even crowned emperor. He agreed to meet and there is some evidence both parties planned to double-cross the other, but only Pizarro succeeded. He took the emperor hostage and Atahualpa paid for his own release, only to be executed shortly thereafter anyway.

For 15 years, Pizarro and his crew laid waste to the people they found, killing thousands if not more and taking everything of value. The entire Inca monarchy fell, and the empire was destroyed utterly at this Spaniard’s hands. He had, in effect, committed genocide.

Genghis Khan

You could make a strong case that no one else has had a more profound impact on the entire world than Genghis Khan. The onetime emperor of Mongolia lived from 1162 to 1227. The number of deaths attributed to him can never be accurately calculated, but estimates range from several million on the low end to as many as 60 million. There is also evidence that about 0.5% of the present-day male population can be genetically linked to him, which works out to around 16 million men.

Born as Temujin, he would go on to rule over an empire that would not be rivaled for over 700 years until the British Empire, which had a good number of advancements over what the Mongols had at their disposal under Genghis Khan’s rule.

Khan united Mongolian tribes and spread the Mongol empire over 12 million square miles. He was known for having a skilled military mind and superior tactics against many of the enemy forces he met. He was also known to appreciate talent rather than fear it and, when he conquered people, he would fold those who impressed him into his ranks.

In one such tale, Khan was nearly killed in battle when an enemy soldier shot his horse, killing it and throwing him to the ground. After the battle was won by the Mongolian forces, Genghis Khan demanded to know who it was that had shot his horse.

One of the enemy soldiers admitted he had been the one to kill the horse and, rather than take vengeance on the man, Genghis Khan made the man an officer in his own army. In this way he was able to use the talents and skills of the conquered people to better his own forces and most of these soldiers proved to be incredibly loyal.

Often as he spread his Empire outward, he would give new nations the chance to surrender, but his patience was limited. Those who spurned his offer would be slaughtered completely, as would anyone who aided them and even those who refused to aid the Mongols in their conquest.

When Khan proposed that the Khwarezmid Empire surrender, they killed his emissaries. The Mongol Horde was unleashed, and the empire was scoured from Persia. Millions died as a result. In the city of Merv, between 700,000 and 1.3 million died while Khan watched. Stories say he ordered each of his men to kill at least 300 people.

It’s believed the Mongol forces killed the equivalent of three-quarters of the population of modern-day Iran, as well as tens of millions of Chinese. Some scholars have suggested that 11% of the entire world population died by Genghis Khan’s orders.

In addition to killing their many enemies, as the number of genetic descendants shows, the Mongol armies believed that taking women was a reward of battle. Khan himself would choose women to add to his ever-growing harem in each newly conquered land. It’s been said that he would claim the wives and daughters of fallen kings and rulers in particular. Some estimates suggest he fathered as many as 1,000 children.

Khan died sometime in his 60s though the exact cause is unknown and very much disputed. Numerous rumors exist which suggest he died of an illness, wounds from battle, or even during a hunting accident. One story even suggests he was castrated by the wife of a prince he had killed. Khan’s tomb has never been found so no modern science is available to shed light on the true cause of his demise.

Vlad the Impaler

Of all the people on this list, only one has gone down in history as a literal monster. Vlad III, otherwise known as Vlad the Impaler and Vlad Dracula, has been linked with Bram Stoker’s fictional vampire since the book was published in 1897. However, the name Dracula, taken from Vlad II, the Impaler’s father, was a nickname that meant “Son of the Dragon.” His father’s nickname, Vlad Dracul, meant “Vlad the Dragon,” a name given after he joined the Order of the Dragon.

The reason Stoker used the name Dracula seems to have little bearing on the true Vlad, about whom Stoker knew little. If he had, he might have made his fictional vampire even more bloodthirsty.

Vlad was born in Transylvania in 1431. His notorious nickname and reputation are born from his penchant for having his victims impaled on wooden spikes and left to rot in fields or along roads. It’s been said he had so many people impaled that the corpse-topped spikes would look like a forest from a distance.

After Vlad’s father was killed, the Impaler only served a short time on the throne before being usurped and fleeing. He plotted to return however and seized the chance some years later. Once in power, his work was cut out for him as his country had fallen into severe poverty and disrepair. Crime was rampant and the blame, in Vlad’s eyes, was on the aristocracy who not only continued to profit but had been complicit in the death of his father.

Vlad turned on those in power and had them executed, beginning his reign as the impaler. He promoted people loyal to him, not aristocrats but soldiers and even free peasants. Those who violated his new rules as he set out to build the country back to its former glory, including aristocrats, met the spikes.

When the Pope declared war on the Ottomans, Vlad backed the Crusade against the Turks, who had taken the throne from his father in the first place. He waged campaigns against Turkish forces and it’s said he impaled entire enemy armies. Because he was fluent in Turkish, he was able to sneak into enemy territory by pretending to be an ally and then slaughter them en masse with his forces. More than 23,000 enemies were set upon spikes. In a letter to the commander of the Crusade he claimed 23,884 not including those his forces may have burned or those whose heads were cut off.

In later battles, 35,000 men fell to Vlad’s forces. The Ottoman Sultan was said to have retreated from Vlad to what he thought was a safe haven only to find 20,000 of his men on spikes when he arrived.

Though Vlad was formidable in war, he was betrayed by Matthias Corvinus, the man leading the Crusade, who had stolen funds from the Pope. Vlad was imprisoned and blamed for the theft, framed with forged documents.

Vlad would later be freed, and he attempted to regain control of his country once more, but this would be short-lived as he was soon beheaded. Ironically, though the man’s legacy has been tainted by vampire fiction, many from his homeland still regard him as a hero rather than a villain for his efforts to save the country.
